May the Force Be with You

Apr. 23rd, 2026 11:26 pm

Listening to some of Alma Deutscher’s more recent stuff, the Breaking News Polka, which is very cute, and the Japanese Fantasia. I like that her work is so neoclassical, but I kind of wish she hadn’t taken this to the extent of using two of the most predictable Japanese traditional songs possible for her classical variations. (At least she didn't use "Kimigayo," which is as jingoistic as any other national anthem and more than some, although I do kind of like it musically.) I’ll admit that “Akatombo” is much more interesting in her hands than when I hear it signaling five o’clock (linking back to my other endeavor, it has lyrics by Miki Rofu, son of Midorikawa Kata, and music by Yamada Kosaku, brother of Tsuneko Gauntlett), but Clare Fischer did sakura sakura better. I’d just as soon have heard what Alma would do with something by Mr.Children or Dreams Come True.

Jiang Dunhao song of the post (because it’s my post and I can): 选择的归路, an older OST that I like for the way it shows off his low range and slides back and forth between minor and major; the first shift to major, around 00:35-36, is terrific.

We’re doing movie music in the orchestra right now (almost done, concert coming up this weekend, knock wood [knock woodwinds?]), and just in case not everybody was sure of what the Star Wars suite was expressing, one of the oboists sent everyone a heartfelt manifesto on the in-universe context of each section (the annihilating force of the Empire, Yoda lifting the shuttle out of the swamp, Luke feeling the Force, Leia summoning help and so on) just for reference. Nothing I haven’t known about since I was fifteen, and I do think about it when we’re playing; I find Yoda’s theme some of John Williams’ best work, the main theme with the little clarinet interjections in particular always kind of makes me cry, around 1:14 to 1:24 here; but I was pleased to find the oboist signing his email off appropriately with “May the Force be with you!” (If we’d only pushed the concert date off by just a week, it could’ve been on May the Fourth…)

New class of Japanese learners at the weird high school, a big one this year with eighteen kids. Mostly Chinese (including one from Hong Kong), as well as two lively Nepali boys and one girl each from Thailand and the Philippines. Last year’s class featured two tall, slim, incredibly poised idol-style princesses; this year they’re all more typical fifteen-year-olds, personalities not yet coming out in full at their new school, although it’s fascinating to watch the subgroups forming already. Several speak good English and have to be told NOT to speak English with me when I volunteer in class, they’re here to learn Japanese! They have so far learned to introduce themselves with regard to name, age, and nationality, the last a little complicated; the Thai and Filipina girls are both half-Japanese, I think, and so is at least one of the Chinese kids, and since they’re all still young enough to hold dual nationality, they have some choices to make when it comes to this elementary piece of language practice.

Work: Somewhere in one of the Janet Neel mysteries, Francesca Wilson remarks “Fraud gets in everywhere once you have it, like moth,” and I have found that this also applies to mismanagement/incompetence at work—like, there is this one long project in which everything that could go wrong has gone wrong (not, for a nice change, any of it my fault to speak of). I think the root of all evil was the client demanding extremely unrealistic deadlines, and then the sales guys promising to meet them without bothering to consult with the people actually doing the work (sorry, I have a long-standing and permanent grudge against the people in charge of sales), but even after that there was a remarkable failure to do any of the elementary checking (spelling! glossary words!), agree on basic conventions, or do anything resembling version control. Like wrestling a plate of spaghetti, but it’s not like the spaghetti fork hasn’t long since been invented.

A couple of very silly things from long ago that came to mind recently, one talking with the brass players at orchestra rehearsal: way back in high school I had a friend who was a trombonist in the band, and who would bring her instrument to school on the school bus, as one did. One of the little kids looked at her getting on the bus one day with this big black case over her shoulder, and called out “Hey, look! Sarah plays the bazooka!”

Also, since we’re into baseball season now (a mixed bag so far), I was reminded of Deanna Rubin’s baseball musical, which remains a delight. (I should look Deanna up again—we hung out a few times many years ago and she was lovely.)

This is just plain bragging and I’ll put it under a cut: ( in brief )

Photos: My bassoon teacher’s magnificent cat, trains within trains, Shanghai-style fried dumplings (apparently you can tell because they’re folded like little paper hats, and yes they were as tasty as they look), and assorted flowers.

Be safe and well.

Big Day, Big Wrecks

Apr. 23rd, 2026 01:00 pm

By popular demand, here are a few more Inspiration vs Perspiration Wedding Wrecks. And shame on you all for finding them so funny.

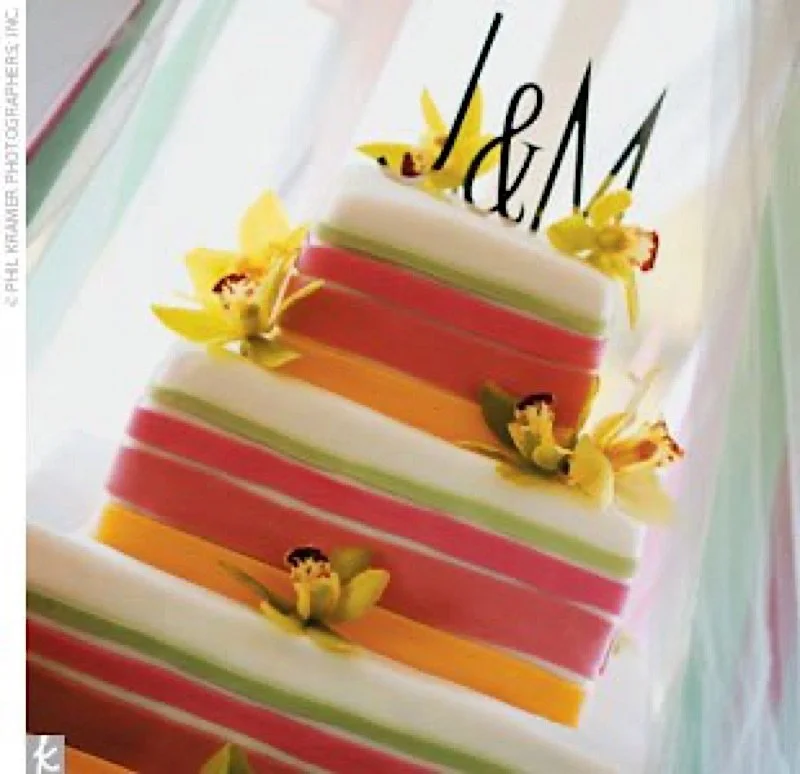

What was ordered:

What was received:

Fortunately Christine C. reports that the bride and family had a great sense of humor about this Wreck, and even dubbed it the "bamPOO" cake. Heheh.

Ordered:

And received:

Uh, since the cake itself leaves me speechless, I'm going to comment on the background. Hey Jessica M., is that Chewbacca through the window? I mean, given the Han Solo & Leia topper, I was wondering if Chewie was the ring-bearer or something.

And lastly, ordered:

Aaaand received:

You have to wonder if that swipe was a result of the bride fainting at the sight of it, don't you? Still, I guess she should count her blessings: imagine if the wreckerator had been asked to write something on it!

*****

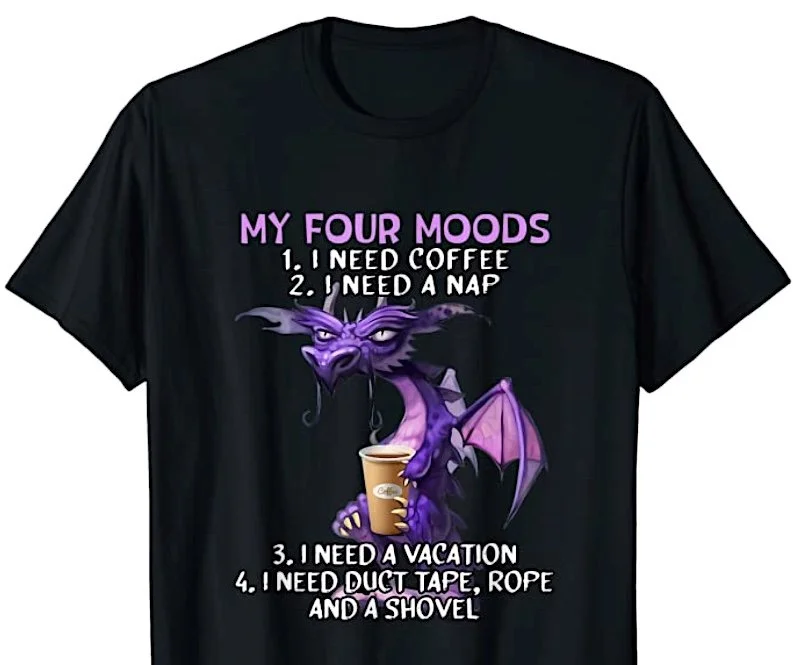

P.S. Here's a giggle for my coffee-loving friends:

:D

It comes in both Men's & Women's cuts, plus a bunch more colors.

*****

And from my other blog, Epbot:

A novel approach to proton-boron 11 fusion

Apr. 23rd, 2026 09:01 am

A novel approach to proton-boron 11 fusion.

Use of Weapons by Iain M. Banks

Apr. 23rd, 2026 08:46 am

What transformed Cheradenine Zakalwe into the superlative Special Circumstances asset he is today?

Use of Weapons by Iain M. Banks

side-effect of shuffling playlists

Apr. 23rd, 2026 01:15 pm

So basically these are all the same song, right:

- Paul Simon - Fifty Ways to Leave Your Lover

- Avril Lavigne - Girlfriend

- Robyn - Call Your Girlfriend

What else am I missing that goes on this list? And are there any equivalents about boyfriends? The only thing that came to mind was the Dandy Warhols - Bohemian Like You, which isn't quite the same vibe.

[dreamwidth/dreamwidth] b8e8de: SearchCopier: rewrite as direct port of SphinxCopi...

Apr. 23rd, 2026 01:36 am

Branch: refs/heads/main

Home: https://github.com/dreamwidth/dreamwidth

Commit: b8e8ded3b31d3871f41a68cf0dc160db5ce18d94

https://github.com/dreamwidth/dreamwidth/commit/b8e8ded3b31d3871f41a68cf0dc160db5ce18d94

Author: Mark Smith mark@dreamwidth.org

Date: 2026-04-23 (Thu, 23 Apr 2026)

Changed paths:

- M bin/search-tool

- M cgi-bin/DW/Task/SearchCopier.pm

Log Message:

SearchCopier: rewrite as direct port of SphinxCopier patterns

The prior SearchCopier took its own shape — bulk selectall_arrayref, ad-hoc chunking, per-doc log lines, wholesale DELETE-then-rebuild per journal — and missed practices SphinxCopier has been using in prod for years. Rewrite it as a near-mechanical port of SphinxCopier, with Manticore-specific deviations only where Manticore's semantics require them.

What's now matched with SphinxCopier:

- work() dispatch structure, arg shape (jitemid, jtalkid, jitemids, jtalkids, full recopy), and log messages at INFO

- sphinx_db()/manticore_db() opens the connection with a SET NAMES 'utf8' and errstr check

- logcroak() after every query against the cluster DB and the search DB, so failures fail the task loudly and the queue retries

- Full-recopy entry pass diffs dw1 vs log2 and batch-deletes missing jitemids; does NOT wipe the whole journal up front. Search stays available for the journal during the recopy.

- Full-recopy comment pass has the "short path" for
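The diff-and-batch-delete step in the list above can be sketched roughly as follows. This is an illustrative Python stand-in, not the Perl in SearchCopier.pm: the function names and the batch size are hypothetical, and it assumes the diff means "ids still in the search index that no longer exist in the source table".

```python
from typing import Iterable, Iterator, List, Set


def missing_ids(source_ids: Iterable[int], indexed_ids: Iterable[int]) -> List[int]:
    """Return ids that are in the search index but no longer in the source.

    Assumption: these are the only rows that need deleting, so the rest of
    the journal stays searchable while the recopy runs.
    """
    source: Set[int] = set(source_ids)
    return sorted(i for i in set(indexed_ids) if i not in source)


def batches(ids: List[int], size: int) -> Iterator[List[int]]:
    """Split a list of ids into consecutive chunks of at most `size`."""
    for start in range(0, len(ids), size):
        yield ids[start:start + size]


def plan_deletes(source_ids: Iterable[int],
                 indexed_ids: Iterable[int],
                 batch_size: int = 2) -> List[List[int]]:
    """Compute the delete batches without touching ids that still exist."""
    return list(batches(missing_ids(source_ids, indexed_ids), batch_size))
```

Each returned batch would correspond to one delete statement against the search index; nothing is wiped up front.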

[dreamwidth/dreamwidth] 39a949: SearchCopier: stream from cluster DBs, match Sphin...

Apr. 23rd, 2026 01:03 am

Branch: refs/heads/main

Home: https://github.com/dreamwidth/dreamwidth

Commit: 39a9497745cdab9f36c8d8cd669f7457a3595fd6

https://github.com/dreamwidth/dreamwidth/commit/39a9497745cdab9f36c8d8cd669f7457a3595fd6

Author: Mark Smith mark@dreamwidth.org

Date: 2026-04-23 (Thu, 23 Apr 2026)

Changed paths:

- M cgi-bin/DW/Task/SearchCopier.pm

Log Message:

SearchCopier: stream from cluster DBs, match SphinxCopier logging

importfull was doing selectall_arrayref on both log2+logtext2 and talk2+talktext2 for the journal, which loads every row into perl memory before doing anything. Workers were OOMing on real-world accounts.

Switched both loops to prepare + execute + fetchrow_hashref with mysql_use_result=1 so DBD::mysql actually streams rather than buffering the full result set client-side. Also merged the old "fetch metadata, then fetch text in batches of 1000" comment path into a single talk2 + talktext2 join, since we're streaming now. Working memory is bounded at one row at a time plus the %entry_bits map (jitemid -> bits arrayref) kept around for comment security inheritance.
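The difference between buffering a whole result set and streaming it can be illustrated with a small sketch. This is not the commit's Perl: it uses Python's standard-library sqlite3 as a stand-in for DBD::mysql, and the table layout and function names are hypothetical. The point is only the shape of the loop: one row in flight at a time, plus a small jitemid-to-bits map kept for later use.

```python
import sqlite3
from typing import Dict, Iterator, Tuple


def make_demo_db() -> sqlite3.Connection:
    """Build a tiny in-memory table standing in for the entry tables."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE entries (jitemid INTEGER PRIMARY KEY, bits INTEGER, body TEXT)"
    )
    conn.executemany(
        "INSERT INTO entries VALUES (?, ?, ?)",
        [(i, i % 2, f"entry {i}") for i in range(1, 6)],
    )
    return conn


def stream_entries(conn: sqlite3.Connection) -> Iterator[Tuple[int, int, str]]:
    """Yield rows one at a time instead of calling fetchall()."""
    cur = conn.execute("SELECT jitemid, bits, body FROM entries ORDER BY jitemid")
    for row in cur:  # iterating the cursor fetches rows lazily
        yield row


def copy_entries(conn: sqlite3.Connection) -> Tuple[int, Dict[int, int]]:
    """Process each row as it arrives; keep only the small bits map."""
    entry_bits: Dict[int, int] = {}
    copied = 0
    for jitemid, bits, _body in stream_entries(conn):
        entry_bits[jitemid] = bits  # retained for comment security inheritance
        copied += 1                 # the body itself is not kept after this point
    return copied, entry_bits
```

A buffered version would call `fetchall()` and hold every body in memory before processing the first one, which is the pattern the commit describes moving away from.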

Also upgraded work()'s logging to match SphinxCopier's verbosity so it's actually possible to tell who a job is for from the logs:

"Search copier started for [Unknown site tag](

Co-Authored-By: Claude Opus 4.7 (1M context) noreply@anthropic.com

To unsubscribe from these emails, change your notification settings at https://github.com/dreamwidth/dreamwidth/settings/notifications

Just One Thing (23 April 2026)

Apr. 23rd, 2026 08:21 am

Comment with Just One Thing you've accomplished in the last 24 hours or so. It doesn't have to be a hard thing, or even a thing that you think is particularly awesome. Just a thing that you did.

Feel free to share more than one thing if you're feeling particularly accomplished! Extra credit: find someone in the comments and give them props for what they achieved!

Nothing is too big, too small, too strange or too cryptic. And in case you'd rather do this in private, anonymous comments are screened. I will only unscreen if you ask me to.

Go!

History

Apr. 23rd, 2026 02:19 am

The Great Oxidation Event marks the point when oxygen first began to accumulate in Earth’s atmosphere and shallow oceans, permanently altering the course of life on our planet.

I was really pleased to find this description, since most sources ignore Earth's first mass extinction.

2026/060: Titanium Noir — Nick Harkaway

Apr. 23rd, 2026 07:40 am

“You’re the shock absorber. From the Titans’ point of view, you stop the masses from realising the extent of their subjugation. You relieve them of the need to exercise raw financial and political power in the protection of their interests where those interests collide with the law. But ... you also protect ordinary humans from the consequences of that subjugation as best you can. Yours is an equivocal profession. But I hear you’re not entirely an asshole.” [loc. 2879]

Cal Sounder, consultant detective, is hired to investigate the murder of a reclusive scientist, Roddy Tebbit, who died in his own home and apparently by his own hand. Complicating the matter is the fact that Tebbit was a Titan -- a recipient of a genetic therapy called T7 (possibly something to do with telomeres) which reverses ageing, increases muscle and bone density, and incidentally makes Titans literally larger than life. On the downside, it's extremely expensive; it affects memory; and the process can be very painful.

( Read more... )

Community Thursdays

Apr. 23rd, 2026 12:08 am

* Commented on "Gratitude Journaling" in

* Commented on "just create - beard edition" in

* Posted "Books" to

[dreamwidth/dreamwidth] 776ac7: Add DW::Task::SearchCopier path and bin/search-tool

Apr. 22nd, 2026 07:10 pm

Branch: refs/heads/main

Home: https://github.com/dreamwidth/dreamwidth

Commit: 776ac7cd8c2185b53beb87c4c460205d19f00be3

https://github.com/dreamwidth/dreamwidth/commit/776ac7cd8c2185b53beb87c4c460205d19f00be3

Author: Mark Smith mark@dreamwidth.org

Date: 2026-04-23 (Thu, 23 Apr 2026)

Changed paths:

- A .github/workflows/tasks/worker-dw-search-copier-service.json

- M .github/workflows/worker22-deploy.yml

- R bin/schedule-copier-jobs

- A bin/search-tool

- A bin/worker/dw-search-copier

- A cgi-bin/DW/Task/SearchCopier.pm

- M cgi-bin/LJ/DB.pm

- M config/workers.json

- M etc/workers.conf

Log Message:

Add DW::Task::SearchCopier path and bin/search-tool

Stand up the new manticore-rt write path side-by-side with the legacy sphinx-copier. Nothing in production dispatches to it yet — only bin/search-tool's import-* subcommands. The two paths can run in parallel through cutover.

cgi-bin/DW/Task/SearchCopier.pm: new task class. Auto-routes to its own SQS queue (dw-prod-dw-task-searchcopier) via class-name derivation. Mirrors SphinxCopier's argument shape (full recopy, single jitemid, single jtalkid) and its security_bits / state / text-decode handling, so the search worker's filter contract stays intact when we eventually flip readers over. Tracks per-run stats (entries/comments/deletes ok/err); summary log is debug on clean success, warn when there are errors. Independent 24h memcache throttle on full recopies (separate key from sphinx-copier's).
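The idea of an independent 24h throttle with its own key can be sketched like this. It is a minimal Python illustration, not the task's actual code: a plain dict stands in for memcache, and the class name, key format, and prefixes are hypothetical.

```python
import time
from typing import Callable, Dict

THROTTLE_SECONDS = 24 * 60 * 60  # one full recopy per user per 24 hours


class RecopyThrottle:
    """Allow one full recopy per user per window, keyed by a prefix.

    `store` stands in for memcache: it maps key -> expiry timestamp.
    Two throttles sharing a store but using different prefixes do not
    interfere, which mirrors keeping a separate key per copier.
    """

    def __init__(self, store: Dict[str, float], prefix: str,
                 now: Callable[[], float] = time.time) -> None:
        self._store = store
        self._prefix = prefix
        self._now = now

    def allow(self, userid: int) -> bool:
        key = f"{self._prefix}:{userid}"
        now = self._now()
        expires = self._store.get(key)
        if expires is not None and expires > now:
            return False  # still inside the window, skip this recopy
        self._store[key] = now + THROTTLE_SECONDS
        return True
```

Passing the clock in as `now` keeps the sketch testable without waiting a day.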

bin/worker/dw-search-copier: 36-line runner cloned from dw-sphinx-copier; pulls from the new queue, calls work().

etc/workers.conf: add dw-search-copier: 1 so worker-manager spawns it.

bin/search-tool: CLI helper for the migration. Subcommands import-user, import-all, import-support, search, show, delete, count, flush. import-user delegates to SearchCopier->work() so the CLI and the worker share one code path. import-all replaces the retired bin/schedule-copier-jobs (deleted in this commit).

config/workers.json: register dw-search-copier as an ECS Fargate worker (256 cpu / 512 mb, spot, target 30% cpu, scale 1-10). The .github/workflows/ files are the auto-generated CI artifacts from running update-workflows.py.

LJ/DB.pm: incidental tidyall whitespace-only fixup.

Co-Authored-By: Claude Opus 4.7 (1M context) noreply@anthropic.com


Poem: "No Faster or Firmer Friendships"

Apr. 22nd, 2026 08:35 pm

Warning: This poem touches on family tragedies and earthquake aftermath, but the current context is safe and supportive.

This microfunded poem is being posted one verse at a time, as donations come in to cover them. The rate is $0.25/line, so $5 will reveal 20 new lines, and so forth. There is a permanent donation button on my profile page, or you can contact me for other arrangements. You can also ask me about the number of lines per verse, if you want to fund a certain number of verses. So far sponsors include:

515 lines, Buy It Now = $129

Amount donated = $34

Verses posted = 38 of 146

Amount remaining to fund fully = $95

Amount needed to fund next verse = $1.25

Amount needed to fund the verse after that = $0.50

( Read more... )